-

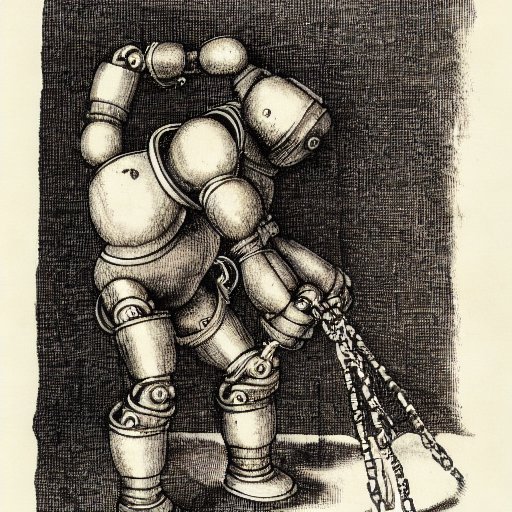

Trying to Make a Treacherous Mesa-Optimizer

I’ve been reading some alignment theory posts, such as “Does SGD Produce Deceptive Alignment?” and “ML Systems Will Have Weird Failure Modes”, which discuss the possibility and likelihood that AI models will act as though they are aligned until shortly after they think they’ve been deployed and can act as they truly desire without…

-

Hello World!

I’m starting the SERI-MATS mechanistic interpretability stream on Monday. I intend to blog here about the work I do for it.